A few days ago I published Burla. That post was the public “why now” moment. This one is the more opinionated follow-up.

Toy examples are where mocking libraries look tidy. Real migrations are where they tell the truth. One interface, one return value, one happy path proves almost nothing. The real test starts when you move a real suite, hit async-heavy code, wrestle with ref and out parameters, or ask an AI assistant to help with a large mechanical rewrite.

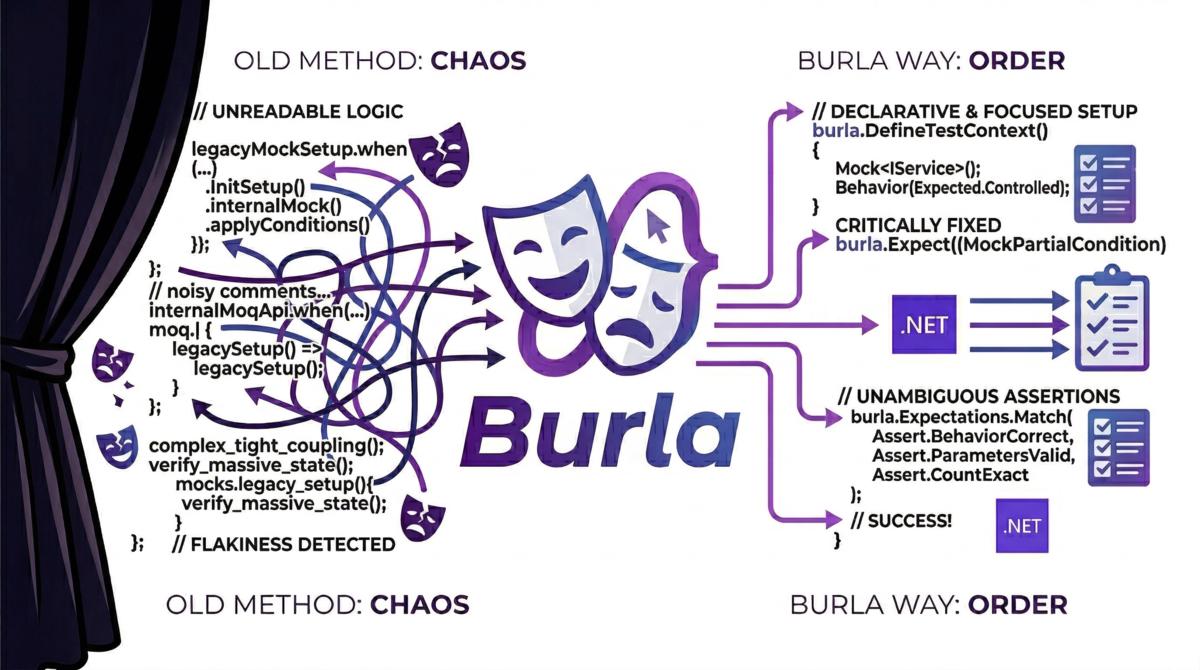

If you have not seen the launch post yet, start with “Burla is finally public”. The short version is that I wanted a library that stays readable under real pressure, not just in tidy examples.

The hard part is migration

Maybe you inherited Moq. Maybe your team standardised on NSubstitute years ago. Maybe half the suite was written by different people with different habits. Nearly every mocking library looks competent on the easiest test in the suite. That is not the bar. The question that matters is whether the API still feels sane when you need to move a few hundred or a few thousand tests without turning the whole diff into archaeology.

These are the cases where the trade-offs get loud:

| What bites you | Why it gets expensive |
|---|---|
| Async-heavy tests | Helper methods and odd setup patterns pile up quickly |
| ref / out parameters | Syntax gets special-casey fast |
| Verification rituals | Reviewers have to mentally switch assertion styles |
| Inconsistent APIs | Migration tooling and AI assistants make more mistakes |
| Loose defaults | Missing setup quietly hides broken test intent |

That is the gap I wanted Burla to address: a smaller, more explicit API that still holds up when the codebase is messy.

The shape I wanted instead

My rule of thumb was simple: if a human can understand a test double quickly, an AI assistant is much more likely to generate or migrate it correctly as well.

That pushed the design in a few obvious directions:

- one main setup vocabulary

- short argument matchers

- strict behaviour by default

- async support that feels native instead of bolted on

- call inspection that works with normal assertions

- migration help treated as a first-class feature, not an afterthought

The AI angle matters, but I do not think it is hype. A lot of engineering work now is “change many files safely”. If the library API is full of quirks and exceptions, both humans and LLMs waste energy on framework trivia instead of the test itself. That is a design smell now, not just an annoyance.

What that looks like in practice

This is the shape I wanted Burla to have in day-to-day tests:

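```csharp
using Burla;
using Xunit; // Assert.Equal / Assert.Single below are xUnit assertions

// Create the mock and make Add return 42 for any pair of ints.
var mock = Mock.Of<ICalculator>();
mock.Setup(x => x.Add(Arg.Any<int>(), Arg.Any<int>()))
    .Returns(42);

var result = mock.Instance.Add(1, 2);

// Check the result, then check the recorded call with a plain assertion.
Assert.Equal(42, result);
Assert.Single(mock.CallsTo(x => x.Add(1, 2)));
```

If you already write tests in .NET, most of that should feel obvious.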

You create a mock with Mock.Of<T>().

You configure behaviour with Setup(...).

You match arguments with Arg.*.

You query calls and assert with the test framework you already use.

That last point matters a lot to me. Burla supports Verify(...) and Times(...), and .Object is still there as a compatibility alias, but the native style is mock.Instance plus CallsTo(...) and normal assertions. I want the library to fit inside the test, not try to become the whole language of the test.

The main design bets

Strict by default

Unconfigured calls throw.

I want that behaviour. When I am reading a test, missing setup should fail loudly instead of quietly returning whatever default happened to fit the type. Loose-by-default looks friendly right up until it hides the exact bug the test was meant to catch.

You can still opt into loose behaviour when it makes sense, but strict-by-default catches mistakes early, especially during migration work where a missing setup usually means the conversion is incomplete.
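To make the strict default concrete, here is a minimal sketch that uses only the calls shown earlier in this post. The `ICalculator` interface is a hypothetical stand-in, and because the exception type is not named here, the assertion deliberately accepts any exception instead of guessing a specific one.

```csharp
using System;
using Burla;
using Xunit;

var mock = Mock.Of<ICalculator>();

// No Setup(...) exists for Add yet, so under the strict default this call should throw.
// The concrete exception type is an assumption-free catch-all: any Exception passes.
Assert.ThrowsAny<Exception>(() => { mock.Instance.Add(1, 2); });

// Once the call is configured, the same invocation returns the configured value.
mock.Setup(x => x.Add(Arg.Any<int>(), Arg.Any<int>()))
    .Returns(3);
Assert.Equal(3, mock.Instance.Add(1, 2));

// Hypothetical interface, for illustration only.
public interface ICalculator
{
    int Add(int a, int b);
}
```

During a migration, the first assertion is the interesting one: a converted test that forgot a setup fails at the call site instead of passing with a silent default.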

Async should feel boring

Modern .NET code is full of Task, ValueTask, and IAsyncEnumerable. A mocking library should not make those cases feel exotic.

If the happy path is clean but async needs helper archaeology, the API is only pretending to be simple. Any library can look elegant until IAsyncEnumerable walks into the room.
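For readers who have not hit this yet, the sketch below shows the kind of hand-written adapter that tends to accumulate in test suites when a mocking library has no natural way to return an IAsyncEnumerable. It is plain C# and not Burla code; the helper name `AsAsyncStream` is made up for illustration, and Burla's own async setup syntax is not shown here because this post does not spell it out.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// Consume the fake stream the same way production code would.
var seen = new List<int>();
await foreach (var n in AsAsyncStream(new[] { 1, 2, 3 }))
{
    seen.Add(n);
}

Console.WriteLine(string.Join(",", seen)); // prints "1,2,3"

// Hypothetical test helper: wraps an in-memory sequence as an async stream.
static async IAsyncEnumerable<T> AsAsyncStream<T>(IEnumerable<T> items)
{
    foreach (var item in items)
    {
        await Task.Yield(); // yield control so each item arrives asynchronously
        yield return item;
    }
}
```

One helper like this is harmless. The cost shows up when every test project grows its own slightly different copy, which is exactly the sort of noise that makes a large migration harder to review.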

Verification should look like the rest of the test

I generally prefer treating calls as data and asserting on them with the test framework directly:

```csharp
var saveCalls = mock.CallsTo(x => x.Save(Arg.Any<User>()));

Assert.Single(saveCalls);
```

I have very little patience for verification APIs that read like a ritual. Keeping the test inside the assertion tools you already know makes reviews easier, and it stops the mock library from trying to become a second language inside the test.
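As a slightly fuller sketch of the calls-as-data style, the example below checks both "called once" and "never called" with ordinary xUnit assertions, with the Moq equivalents in comments for comparison. `IUserStore` and `User` are hypothetical types, and the example assumes that `CallsTo(...)` on a method that was never invoked yields an empty collection, which seems the natural reading but is not stated in this post.

```csharp
using Burla;
using Xunit;

var store = Mock.Of<IUserStore>();
store.Setup(x => x.Save(Arg.Any<User>()))
     .Returns(true);

store.Instance.Save(new User("ada"));

// Moq would express the same checks through its own verification vocabulary:
//   storeMock.Verify(s => s.Save(It.IsAny<User>()), Times.Once());
//   storeMock.Verify(s => s.Delete(It.IsAny<User>()), Times.Never());
// Here the recorded calls are collections handed to the test framework.
Assert.Single(store.CallsTo(x => x.Save(Arg.Any<User>())));
Assert.Empty(store.CallsTo(x => x.Delete(Arg.Any<User>()))); // assumes no calls means an empty result

// Hypothetical types, for illustration only.
public sealed record User(string Name);

public interface IUserStore
{
    bool Save(User user);
    bool Delete(User user);
}
```

A reviewer who knows xUnit can read both assertions without learning a separate counting syntax.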

Migration should be a product feature

This is the part I think the ecosystem still underestimates.

Teams revisit these choices. They inherit test suites, merge styles, outgrow old habits, and sometimes want to move without freezing delivery for a month. That is why Burla ships with migration guides from Moq and NSubstitute, plus a dedicated LLM migration reference.

Compatibility sugar such as .Object, It.*, SetupGet(...), and Verify(..., Times...) exists for that same reason. I do not think a new library earns points by making migration artificially painful just to prove ideological purity.

Inconsistent APIs tax both humans and LLMs

This is probably the most current design pressure on Burla.

When an API has one naming pattern for one case, a different matcher story for another, and a third verification dialect for edge cases, humans slow down and AI tools get unreliable. The exact same inconsistency tax hits both.

That does not mean every library now has to be “for AI” as a marketing slogan. It means consistency is worth more than people used to think, because the cost of inconsistency now shows up in everyday reading, code reviews, migrations, and AI-assisted rewrites all at once.

How I would evaluate it

If you want to try Burla, my advice is simple: do not start with the easiest happy-path mock in your suite.

Start with one awkward test.

- Pick a test that uses async heavily, or one with noisy verification.

- Migrate that first.

- Compare readability after the move, not just line count.

- Only scale up once the new shape feels better in reviews.

Pro tip: if a mocking library only looks good on tiny synchronous examples, you still do not know whether it solves the real problem.

The point of the whole thing

Burla is opinionated because I think test tooling is better when it picks a lane.

I am not trying to build the largest possible mocking surface. I am trying to make the painful path less painful: migrations, awkward tests, noisy diffs, and the increasingly common case where some part of the work is being reviewed, translated, or generated with AI help. If a mocking library only shines in demos, I do not find that very interesting.

If that sounds useful, the best places to start are the documentation, the Moq migration guide, the NSubstitute migration guide, and the LLM migration reference.

Happy coding!